Samsung Unveils HBM4E Memory at Nvidia GTC 2026

It has been just over a month since Samsung announced mass production of its HBM4 memory chips, and the company isn’t slowing down. It has now unveiled its next-gen HBM4E memory, which raises per-pin data rates to give AI hardware more memory bandwidth.

Samsung’s HBM4E revolutionizes high-bandwidth memory

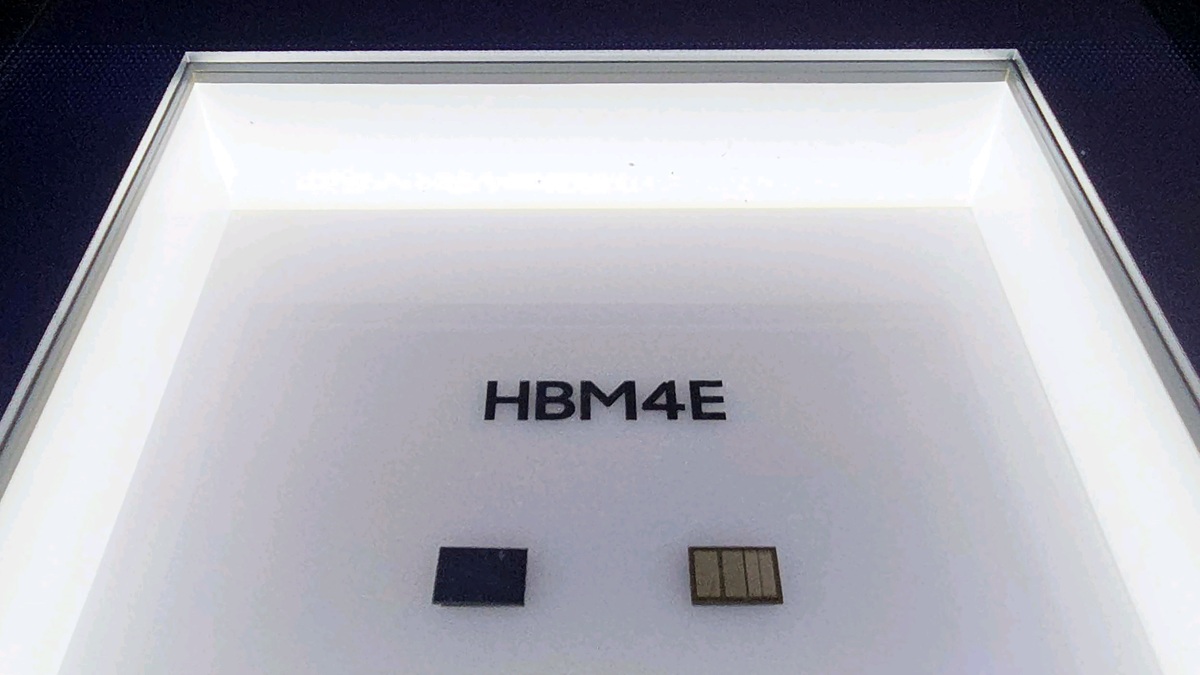

Samsung showcased its HBM4E technology at Nvidia GTC 2026 (March 16-19). HBM4E offers data transfer rates of 16 gigabits per second (Gbps) per pin and a total bandwidth of 4 terabytes per second (TB/s). That lets AI systems move massive amounts of data between memory and processor quickly, which speeds up AI development. Built on Samsung's in-house sixth-gen 10nm-class DRAM process, the memory achieves high performance with stable yields.

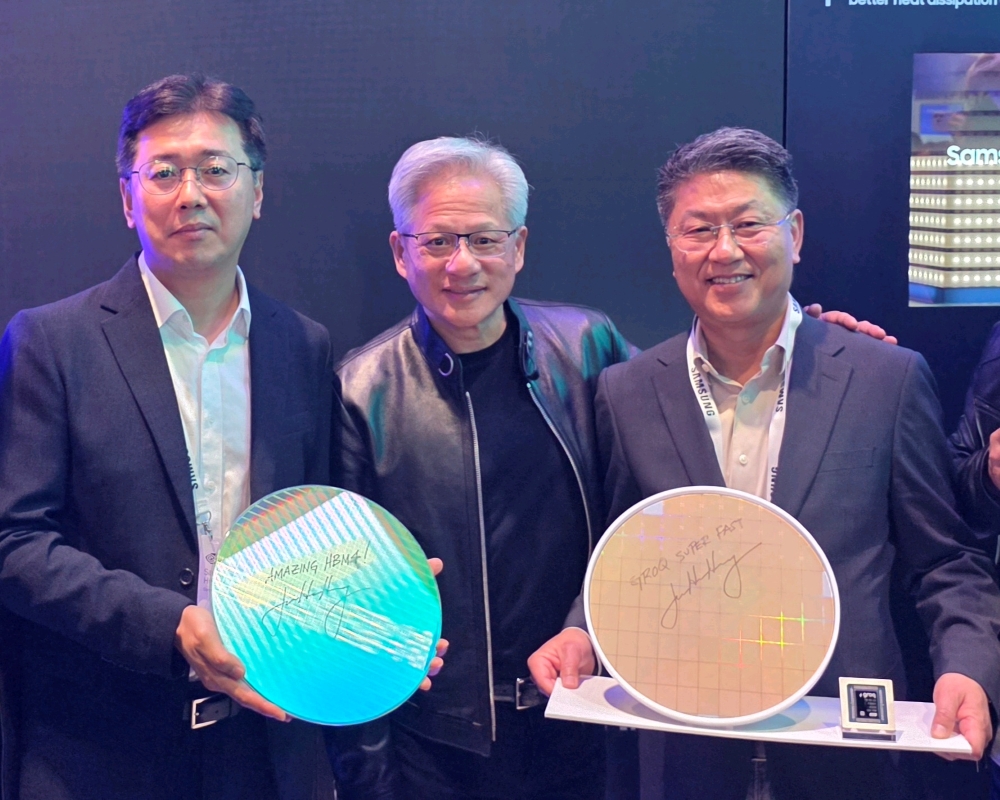

In comparison, HBM4 runs at a consistent 11.7 Gbps per pin, so HBM4E's 16 Gbps is roughly a 37% increase in per-pin data rate, a substantial leap for AI workloads. At the exhibition, visitors could see HBM4 chips mounted on the Nvidia Vera Rubin platform alongside base die wafers built on Samsung Foundry's 4nm process. Jensen Huang, CEO of Nvidia, visited the event and signed an HBM4 wafer with the words “Amazing HBM4.”
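For readers wondering how a per-pin rate turns into a terabytes-per-second figure, the arithmetic is simple. The sketch below is only a rough check, not Samsung's own calculation: it assumes a 2048-bit interface per stack, the width defined in the JEDEC HBM4 standard, which Samsung's announcement as reported here does not state. The function name and constant are illustrative.

```python
# Assumption: 2048 data pins per stack (the JEDEC HBM4 interface width).
# This width is not stated in the article; it is used here for illustration.
INTERFACE_WIDTH_BITS = 2048


def stack_bandwidth_tbps(pin_rate_gbps: float,
                         width_bits: int = INTERFACE_WIDTH_BITS) -> float:
    """Return bandwidth in TB/s (decimal units) for a given per-pin rate."""
    gigabits_per_second = pin_rate_gbps * width_bits  # all pins combined
    gigabytes_per_second = gigabits_per_second / 8    # 8 bits per byte
    return gigabytes_per_second / 1000                # GB/s -> TB/s


hbm4e = stack_bandwidth_tbps(16.0)  # per-pin rate quoted for HBM4E
hbm4 = stack_bandwidth_tbps(11.7)   # per-pin rate quoted for HBM4

print(f"HBM4E: {hbm4e:.2f} TB/s")
print(f"HBM4:  {hbm4:.2f} TB/s")
print(f"Per-pin increase: {16.0 / 11.7:.2f}x")
```

Under that assumption, 16 Gbps per pin works out to about 4.1 TB/s, in line with the 4 TB/s Samsung quotes, while 11.7 Gbps gives roughly 3 TB/s.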

Samsung also showcased Hybrid Copper Bonding (HCB) technology at the event, which allows memory stacks of 16 layers or more. The technique also reduces thermal resistance by over 20% compared to traditional thermal compression bonding (TCB).

Furthermore, Samsung highlighted several AI solutions, such as SOCAMM2 server memory modules and PM1763 SSDs. The former uses low-power DRAM that offers high bandwidth and flexible system integration, while the latter uses the latest PCIe 6.0 interface for fast data transfers and high capacities.

Samsung also shared its future plans for HBM chips. The company plans to combine its 1c DRAM process with a 2nm foundry process to make HBM5, expected to debut in about two years. It then plans to pair the more advanced 1d DRAM process with the same 2nm foundry process to create HBM5E. These advancements should deliver even higher speeds and better efficiency for future AI systems.