Samsung Launches TRUEBench to Measure Real-World AI Productivity

Samsung has launched TRUEBench (Trustworthy Real-world Usage Evaluation Benchmark), a benchmark platform for evaluating the productivity of AI models. It supports multilingual productivity scenarios to close gaps left by existing AI benchmarks, with the aim of helping businesses around the world understand how useful AI is in practice.

TRUEBench evaluates LLMs’ performance in real-world workplace productivity applications

Large language models (LLMs) are now integral to many tasks, such as writing reports and analyzing data. However, current benchmarks fall short of measuring how well AI performs in real-world situations. To address this, Samsung Research developed TRUEBench as a comprehensive framework for evaluating LLMs in real-world applications.

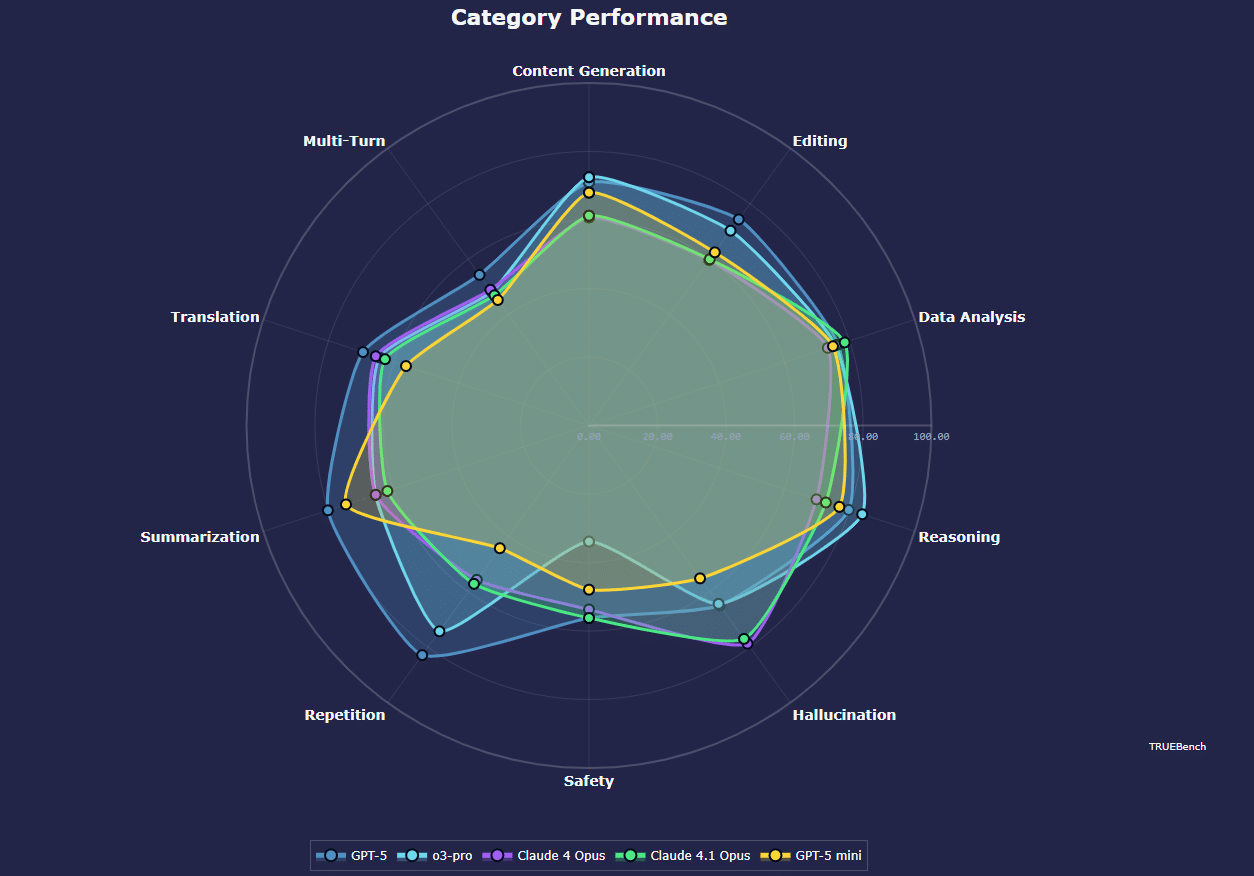

For realistic evaluation, TRUEBench combines a set of metrics with diverse dialogue scenarios and multilingual conditions. Drawing on Samsung's own experience using AI at work, the platform tests common business tasks such as content generation, data analysis, summarization, and translation. It covers 10 main categories and 46 sub-categories, and scores responses automatically with AI, using evaluation criteria that humans and AI design and refine together.

“Samsung Research brings deep expertise and a competitive edge through its real-world AI experience,” said Paul (Kyungwhoon) Cheun, CTO of the DX Division at Samsung Electronics and Head of Samsung Research. “We expect TRUEBench to establish evaluation standards for productivity and solidify Samsung’s technological leadership.”

According to Samsung, existing benchmarks mainly measure overall performance and are limited to single-question, single-answer tests. By contrast, the Korean firm's benchmark includes 2,485 test sets across 10 categories and 12 languages, and supports cross-linguistic scenarios. Requests in the test sets range from as short as 8 characters to more than 20,000 characters.

TRUEBench data and leaderboards are available on the open platform Hugging Face. Users can compare up to five AI models side by side to see their performance at a glance. The platform also shows each model's average response length, so efficiency can be compared alongside performance. To learn more about TRUEBench, visit its page on Hugging Face.